Convex Optimization: Part 1

Convex optimization is a method that finds the optimal solution by modeling a problem as a smooth landscape where every step in the right direction leads closer to the best answer. Imagine a crawler robot tasked with reaching the summit of a gently curved, dome-shaped mountain: because the terrain continuously slopes upward without any hidden dips or traps, the robot can reliably follow the gradient of the slope, taking small steps that always bring it higher until it reaches the top. Similarly, in convex optimization, the problem is shaped so that if you make a local improvement, you’re assured that you are moving toward the overall best solution. Another intuitive example is tuning a machine for peak performance, by making gradual, systematic adjustments and measuring improvement, you can confidently zero in on the setting that maximizes efficiency.

Convex optimization is a versatile tool used across countless fields, ranging from engineering and computer science to economics and biology, among others. Whether it’s fine-tuning financial portfolios, optimizing power distribution in smart grids, enhancing image quality in digital cameras, or calibrating control systems in robotics, convex optimization provides the reliable, step-by-step framework that ensures the best possible outcome.

In this blog series, we'll explore:

- How to determine if a function is convex.

- Properties and operations that preserve convexity.

- Standard forms of convex optimization problems.

- The Karush-Kuhn-Tucker (KKT) conditions: what they are and when they apply.

- Mathematical derivations, with an SVM-like example and various convex loss functions.

- A broad survey of commonly used convex functions in optimization.

Series Division:

- Part 1: Fundamentals, Convexity Criteria, and Examples.

- Part 2: Duality, KKT Conditions, and Advanced Applications.

- Part 3: Convex Loss Functions.

1. Identifying Convex Functions

A function is convex if and only if, for all and all ,

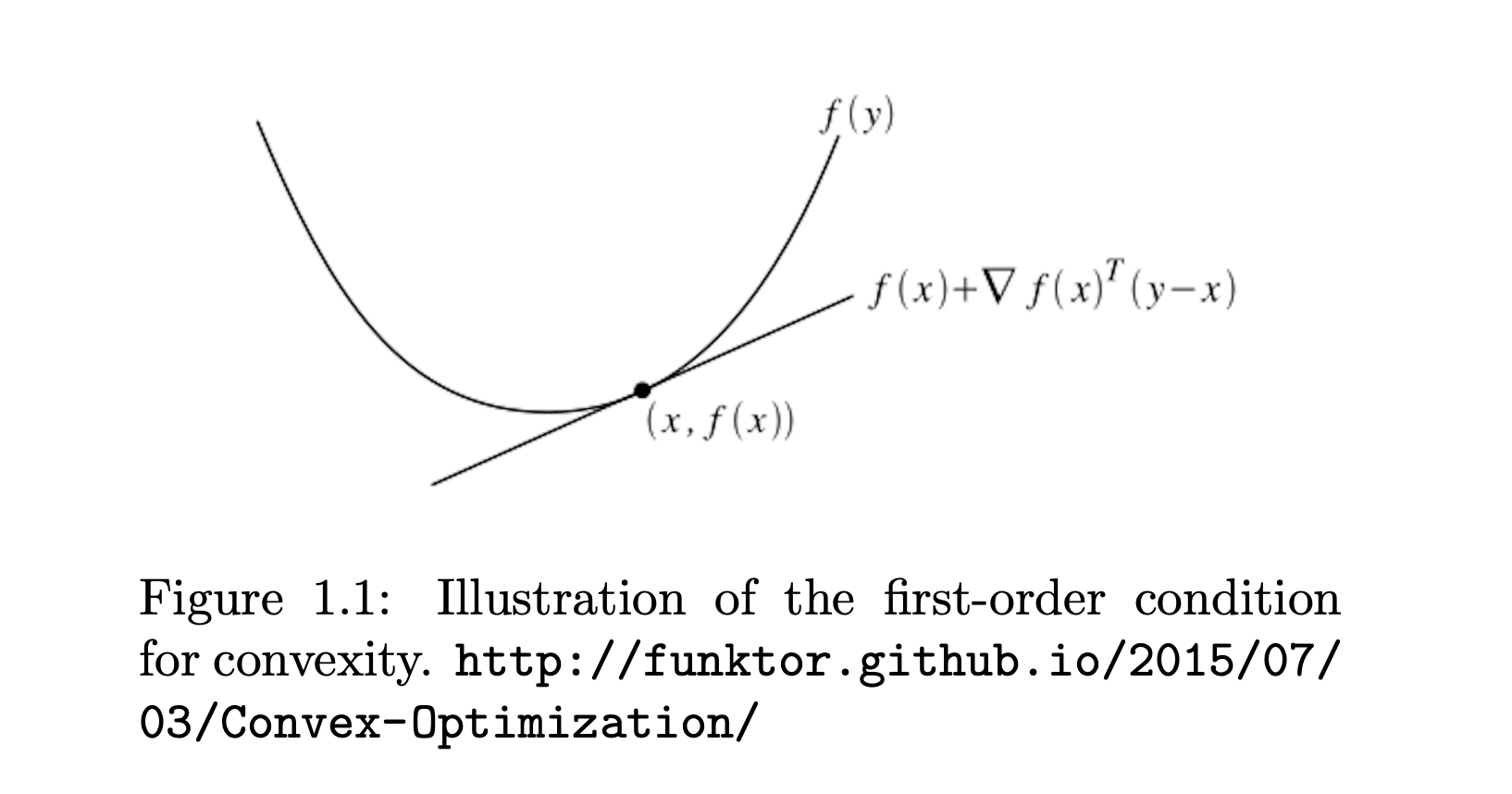

1.1 First-Order and Second-Order Criteria

-

First-Order Test (if is differentiable):

This means the function always lies above all its linear (first-order) approximations. We can draw a tangent line at any fixed point x such that . The tangent line always lies below the convex function .

-

Second-Order Test (if is twice differentiable):

i.e. the Hessian is positive semidefinite at every point .

A matrix (typically symmetric) is positive semidefinite (PSD) if for every vector the quadratic form is greater than or equal to zero.

How to check:

- Eigenvalue Method: Compute the eigenvalues of . If all eigenvalues are nonnegative, is PSD.

- Principal Minors: For small matrices, you can also check that all principal minors (determinants of the top-left submatrices) are nonnegative.

This means that no matter which direction you “test” the matrix with a vector, the resulting value will not be negative.

Below are some important examples demonstrating these criteria in action—some are convex, one is not.

1.2 Preserving Convexity

Convex functions are preserved under certain operations. Here are some key closure properties:

-

Nonnegative Weighted Sum: If are convex and , then is convex.

-

Affine Transformation: If is convex, then is convex for any matrix and vector .

-

Pointwise Maximum: If are convex, then is convex.

-

Composition with a Nondecreasing Convex Function: If is convex and nondecreasing in each argument, and is convex with nonnegative components, then is convex.

2. Examples

2.1 Quadratic Function

Consider the univariate quadratic

or the multivariate version

where (positive semidefinite).

Step-by-step check:

- Gradient (multivariate):

- Hessian (multivariate):

- Because we have Hence, is positive semidefinite for all implying convexity.

Interpretation: Quadratics with a PSD coefficient matrix are "bowl-shaped," never curving downward.

2.2 Log-Sum-Exp

Define

Step-by-step check (Hessian test):

-

Gradient of :

If we let , thenSo the gradient is

-

Hessian of :

For ,where and is if and otherwise.

More compactly, one can show

which is known to be positive semidefinite. (It is a covariance-like structure because it can be viewed as the Hessian of a log-sum-exp function.)

Thus, , so is convex.

Interpretation: The log-sum-exp function is a smooth approximation of and is widely used in machine learning (e.g., log-partition function).

2.3 Euclidean Norm ()

Consider

Step-by-step check (first-order or second-order approach):

-

Second-Order is trickier at due to non-differentiability there, so we often use a more general property: norms are always convex.

- Geometric argument: The unit ball of is a circle/sphere, which is convex. Hence is convex because it's the Minkowski functional of a convex, symmetric set.

-

Subgradient at :

which does not contradict convexity (though strictly speaking, a subgradient approach is needed at ).

Thus, the Euclidean norm is convex.

General fact: is convex for all .

2.4 Non-Convex Example:

Consider (univariate, for simplicity).

Step-by-step check:

- First derivative:

- Second derivative:

To be convex, we would need for all or at least not be negative anywhere. But is sometimes positive, sometimes negative. In fact, for , indicating is concave in that region. Around we have which doesn't guarantee convexity. Overall, is not convex over .

Interpretation: oscillates, so it curves up and down infinitely often, violating any global convexity requirement.

Notes

- Quadratic + PSD → Convex.

- Log-sum-exp: Strictly convex, as it has a positive semidefinite Hessian.

- ℓ₂-norm: Convex (more generally, ℓₚ-norms for are convex).

- : Not convex, since its second derivative changes sign.

General thinking process when Verifying Convexity:

-

Definition Check: Confirm that for any two points and in the domain and any ,

This is the fundamental property of convex functions.

-

First-Order Condition: For differentiable functions, verify that the function always lies above its tangent lines. In other words, check that

holds for all and .

-

Second-Order Condition: For twice differentiable functions, check that the Hessian matrix is positive semidefinite everywhere in the domain. This guarantees that the curvature is non-negative throughout (and watch for points of non-differentiability).

-

Composition Rules: Many functions are built from simpler components. Convexity is preserved under operations such as:

-

Sum: If and are convex, then

is convex.

-

Scaling: If is convex and is a scalar, then

is convex.

-

Affine Transformation: If is convex and is an affine mapping, then

is convex.

-

Nondecreasing/Nonincreasing Composition:

If is convex and nondecreasing and is convex, thenis convex.

Similarly, if is convex and nonincreasing and is concave, then is convex. -

Pointwise Maximum: The pointwise maximum of convex functions is also convex. If are convex for , then

is convex.

-

Perspective Function: Given a convex function , its perspective

is convex on . This is particularly useful for scaling or normalization.

-

Elementwise Composition: When applies a convex (or concave) function elementwise to a vector , and aggregates those values via a convex operation (like a sum or a norm), the overall function remains convex under suitable conditions. For example, if is convex and yields a vector of convex transformations of , then can be convex.

-

-

Reformulation: If the convexity of the original function is not immediately clear, try to reformulate it as a combination of simpler convex components. This can often reveal the hidden structure.

-

Convex Relaxation: For non-convex functions, a common approach is to approximate them with a surrogate convex function that shares its desired properties. This method makes the problem easier to analyze and solve.

-

Additional Tools: When analytical methods are challenging, numerical verification or symbolic computation tools can provide further insights into the function’s behavior.

Often, just one of these checks is enough to show a function is convex, so pick the approach that fits your problem best. Together, these strategies give you a clear and practical toolkit for tackling even the toughest optimization challenges.

This concludes Part 1 of the series on convex optimization, where I covered the fundamentals, convexity criteria, and illustrative examples. To continue your exploration of this topic, you can check out Part 2: Duality, KKT Conditions, and Advanced Applications and Part 3: Convex Loss Functions for a deeper dive into these advanced concepts.

References & Further Reading

- Boyd, S., & Vandenberghe, L. (2004). Convex Optimization. Cambridge University Press.

- Hastie, T., Tibshirani, R., & Friedman, J. (2009). The Elements of Statistical Learning.

- Schölkopf, B., & Smola, A. (2001). Learning with Kernels. MIT Press.

- García-Gonzalo, E., et al. (2016). A Study on Kernel Methods for Classification.