Image credit: Huang et al. (2022)

In this blog series, we are exploring:

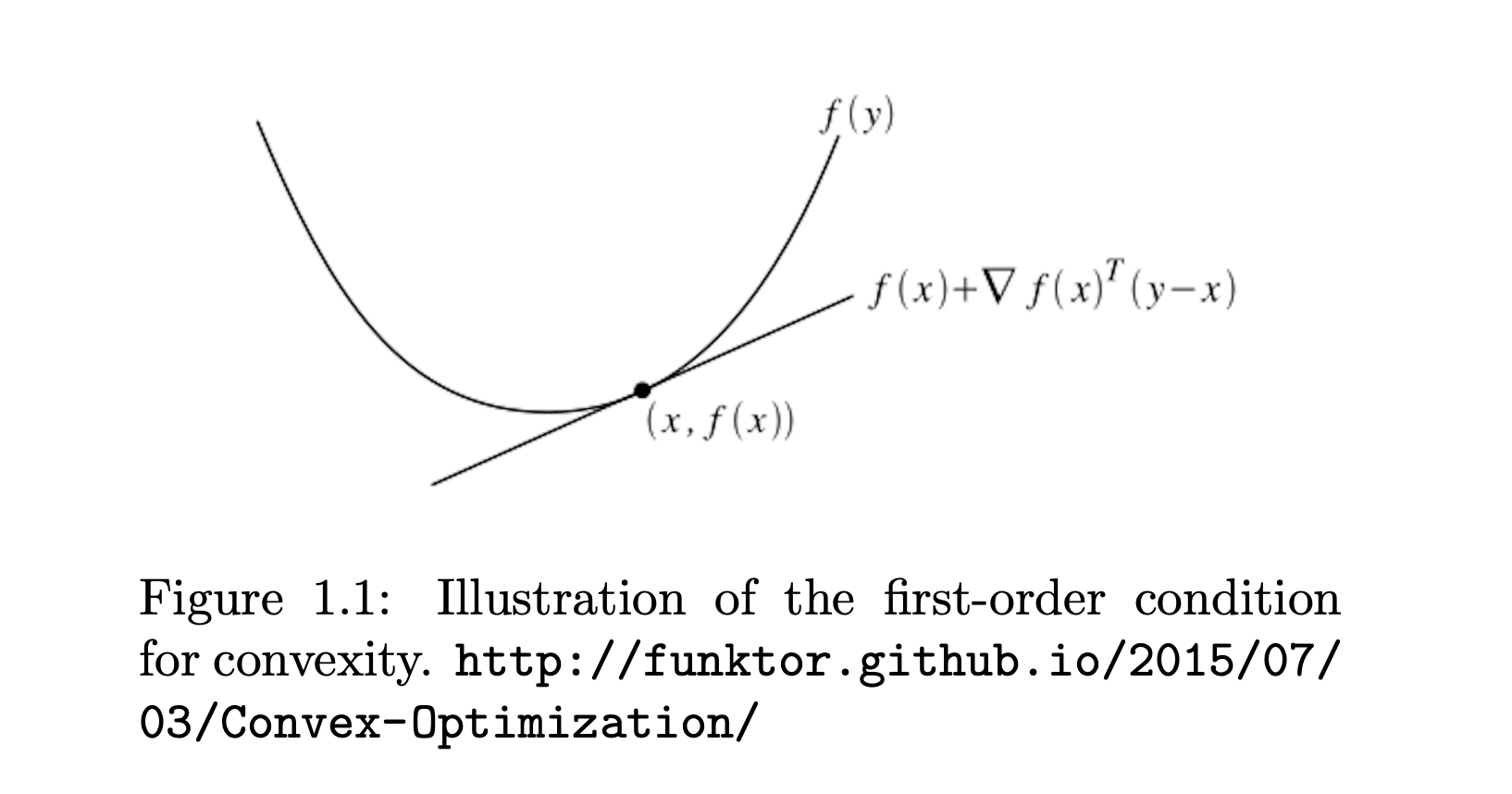

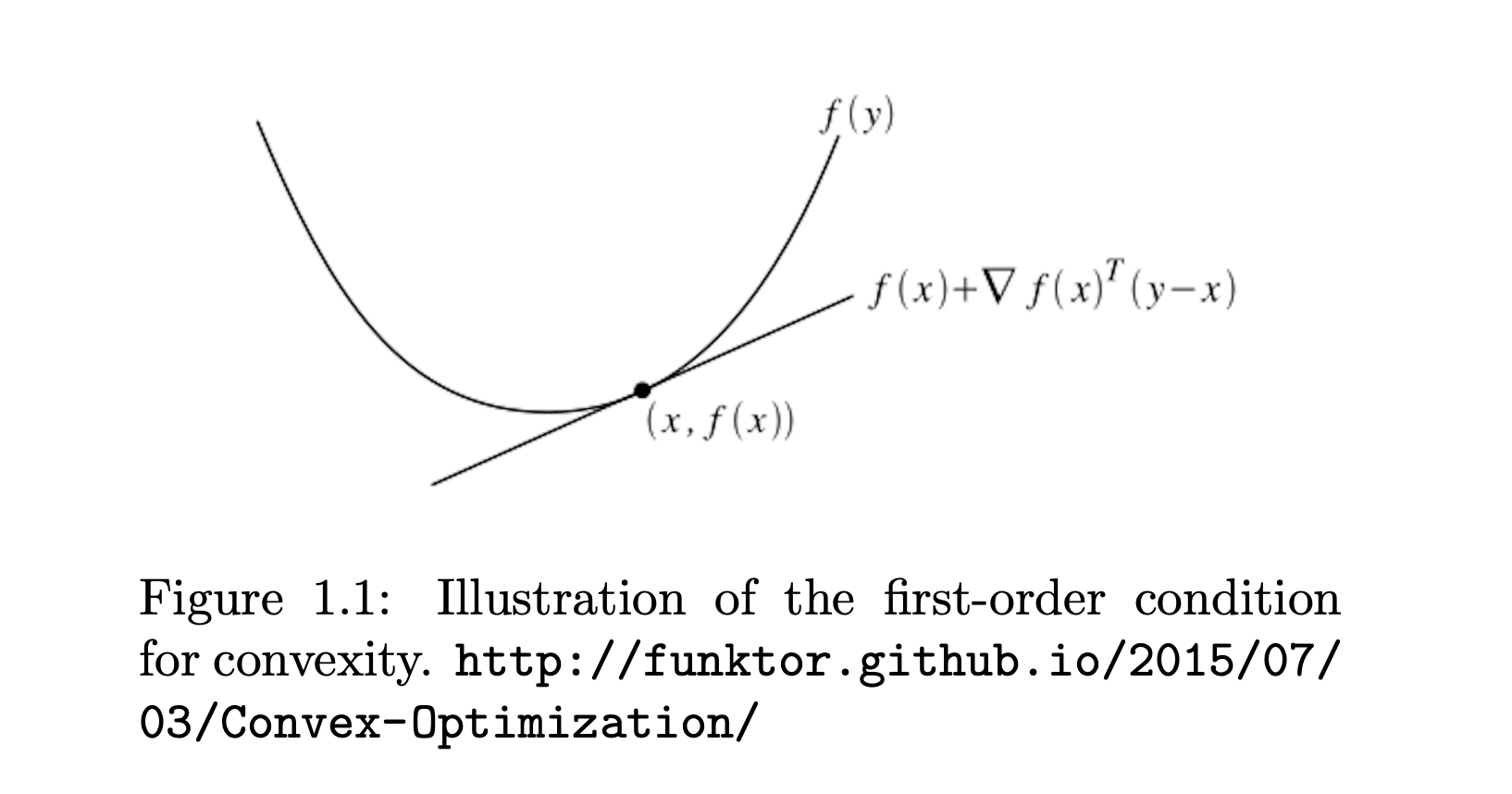

- How to determine if a function is convex.

- Properties and operations that preserve convexity.

- Standard forms of convex optimization problems.

- The Karush-Kuhn-Tucker (KKT) conditions: what they are and when they apply.

- Mathematical derivations, with an SVM-like example and various convex loss functions.

- A broad survey of commonly used convex functions in optimization.

Series Division:

- Part 1: Fundamentals, Convexity Criteria, and Examples.

- Part 2: Duality, KKT Conditions, and Advanced Applications.

- Part 3: Convex Loss Functions.

Building on the fundamentals and examples covered in Part 1, in this blog we dive deeper into the structural aspects of convex optimization.

Here, we examine the duality framework and the Karush-Kuhn-Tucker (KKT) conditions that not only bridge the primal and dual formulations but also provide the critical criteria for optimality.

We will be then exploring applications like the soft-margin SVM and a distributionally robust cross-entropy problem that shows how these theoretical tools are put into practice to solve optimization challenges.

3. Duality and Optimality Conditions in Convex Optimization.

Once we understand the convexity, the next step is to explore how these optimization problems can be viewed from different angles to deepen our understanding and improve our solution methods.

In many cases, we can express a problem in its original, or primal, form and derive a corresponding dual form that offers complementary insights or computational benefits.

At the heart of this discussion are the Karush-Kuhn-Tucker (KKT) conditions, which provide the necessary and, under certain conditions, sufficient criteria for finding the best solution (optimality) when there are constraints.

In this section, we'll dive into these ideas, discussing how the primal and dual formulations relate and how the KKT conditions help us confirm when we've reached an optimal solution.

3.1 Standard Form of a Convex Optimization Problem

A convex optimization problem typically takes the form:

x∈Rnminsubject tof(x)gi(x)≤0,i=1,…,m,hj(x)=0,j=1,…,p,

where f is a convex function, each gi is convex, and each hj is affine. If these conditions hold, and if there exists a feasible point that satisfies Slater's condition (strict feasibility), powerful results like strong duality often kick in.

3.2 Karush-Kuhn-Tucker (KKT) Conditions

The KKT conditions are necessary (and often sufficient) for optimality in convex optimization problems with inequality and equality constraints. Given a problem:

xminsubject tof(x)gi(x)≤0,i=1,…,m,hj(x)=0,j=1,…,p,

there exist Lagrange multipliers λi≥0 and νj such that at the solution x∗:

-

Stationarity:

∇f(x∗)+i=1∑mλi∗∇gi(x∗)+j=1∑pνj∗∇hj(x∗)=0.

-

Primal Feasibility:

gi(x∗)≤0,hj(x∗)=0.

-

Dual Feasibility:

λi∗≥0for all i.

-

Complementary Slackness:

λi∗gi(x∗)=0for all i.

If the problem is convex, these conditions are both necessary and sufficient for global optimality.

Application Case I: Soft-Margin SVM

Here, we work on Soft-Margin SVM's primal and dual forms using KKT conditions. The starting point is the primal formulation:

w,b,ξmin21∥w∥2+Ci=1∑nξi,

subject to margin and nonnegativity constraints:

yi(w⊤xi+b)≥1−ξi,ξi≥0for i=1,…,n.

Let αi≥0 and βi≥0 be the Lagrange multipliers. Form the Lagrangian:

L(w,b,ξ,α,β)=21∥w∥2+Ci=1∑nξi+i=1∑nαi[1−ξi−yi(w⊤xi+b)]−i=1∑nβiξi.

To find the dual, differentiate L with respect to the primal variables and set to zero:

-

Stationarity w.r.t w:

w−i=1∑nαiyixi=0⟹w=i=1∑nαiyixi.

-

Stationarity w.r.t b:

−i=1∑nαiyi=0⟹i=1∑nαiyi=0.

-

Stationarity w.r.t ξi:

C−αi−βi=0⟹βi=C−αi.

Since βi≥0, we get 0≤αi≤C.

We then substitute back into the Lagrangian, eliminating (w,b,ξ) to obtain the dual problem:

αmaxi=1∑nαi−21i,j=1∑nαiαjyiyj(xi⊤xj),

subject to 0≤αi≤C and ∑i=1nαiyi=0.

This exemplifies how the KKT conditions unify primal and dual formulations in a convex setting.

Application Case II: A Distributionally Robust Cross-Entropy Problem with Per-Sample Temperature

Consider a fixed data point (x,y), and define

sk=wk⊤x−wy⊤xfork=1,…,K.

Think of sk as the (shifted) logit score for class k. We introduce a distribution p=(p1,…,pK) over these K classes. Our primal problem is:

p∈RKmaxk=1∑Kpksk

subject to

- ∑k=1Kpk=1,

- ∑k=1Kpklog(pkK)≤ρ,

- pk≥0∀k.

In words:

- We want to maximize a linear function of p.

- p must lie in the probability simplex.

- We impose an entropic constraint ∑kpklog(pkK)≤ρ, which restricts how sharply peaked or uniform p can be.

Proving Convexity

Although the objective is a maximization of a linear function, we can equivalently view it as

p∈Fmin−k=1∑Kpksk,

where F is the feasible set:

- Probability simplex: pk≥0,∑k=1Kpk=1.

- Entropic constraint: ∑k=1Kpklog(pkK)≤ρ.

Linear functions are both convex and concave. The probability simplex is convex, and the set {p:∑kpklog(pkK)≤ρ} is convex because p↦∑kpklog(pk) is concave (its negative is convex). Thus, the intersection of convex sets is convex, making the overall formulation a convex optimization problem (when viewed in minimization form).

Strict Feasibility and Slater’s Condition

To use strong duality, we typically invoke Slater’s condition. We need a strictly feasible point that satisfies

- ∑k=1Kpk=1,

- pk>0 for all k,

- ∑k=1Kpklog(pkK)<ρ.

A simple choice is the uniform distribution pk=1/K. Then

k=1∑KK1log(K1⋅K)=k=1∑KK1log(1)=0.

If ρ>0, this strictly satisfies the constraint. Hence, Slater’s condition holds.

Forming the Lagrangian

Let α be the Lagrange multiplier for ∑kpk=1, and let β≥0 be the multiplier for the entropic constraint ∑kpklog(pkK)≤ρ. Define

Φ(p)=k=1∑Kpklog(pkK)−ρ≤0.

The Lagrangian is

L(p,α,β)=k=1∑Kpksk−α(k=1∑Kpk−1)−β(k=1∑Kpklog(pkK)−ρ).

Rewriting,

L(p,α,β)=k=1∑Kpksk−αk=1∑Kpk−βk=1∑Kpklog(pkK)+α+βρ.

Solving for p

The dual function d(α,β) is given by

d(α,β)=p≥0,∑kpk=1maxL(p,α,β).

Taking derivative w.r.t. pk and setting to zero:

∂pk∂L=sk−α−β[log(pkK)+1]=0.

Hence,

sk−α−βlog(pkK)−β=0,

implying

log(pkK)=β1(sk−α−β),pk=K1exp(βsk−α−β).

We must also enforce ∑k=1Kpk=1. That yields

k=1∑Kpk=k=1∑KK1exp(βsk−α−β)=1.

Solving for α in terms of β leads to

−βα+β=log(K)−log(k=1∑Kexp(sk/β)),

so

α=−βlog(K)+βlog(k=1∑Kesk/β)−β.

Substitute back to find the primal-optimal distribution:

pk∗(β)=K1exp(βsk)/[ℓ=1∑Kexp(βsℓ)].

Constructing the Dual Problem

We then substitute pk∗(β) into L to get the dual function d(α,β) (with α eliminated in favor of β). The entropic constraint ∑kpklog(pkK)≤ρ implies a complementary slackness condition:

β(k=1∑Kpk∗(β)log(pk∗(β)K)−ρ)=0.

In other words,

- If ∑kpk∗(β)log(pk∗(β)K)<ρ, then β=0.

- If ∑kpk∗(β)log(pk∗(β)K)=ρ, then β>0.

Hence, the dual problem is typically a single-dimensional minimization w.r.t. β≥0, where we check whether the constraint is active. Once β∗ is found, we get pk∗ by the expression above.

Primal Solution from Optimal Dual Variables

Once the optimal β∗ is determined (depending on whether the entropic constraint is binding), the final solution is

pk∗=K∑ℓ=1Kexp(β∗sℓ)exp(β∗sk).

This has the flavor of a softmax distribution with an effective temperature 1/β∗. If the constraint is active, it sets the distribution’s entropy to a specific level.

Interpretation

- Learning individual τ: In knowledge distillation, one might want a separate temperature for each sample. The above derivation shows how 1/β∗ can play that role.

- Distributional robustness: The entropic constraint limits how peaked pk can be, thus mitigating the impact of uncertain labels or outliers.

- Entropy regularization view: ∑kpklogpk is (negative) entropy, so adding or constraining it aligns with approaches like KL divergence regularization.

Conclusion:

This part has demonstrated how duality and the KKT conditions serve as powerful instruments for unraveling and solving convex optimization problems,

with practical applications that highlight their utility in machine learning and beyond.

To continue your journey through this comprehensive guide, you can next proceed to Part 3: Convex Loss Functions, where we explore various loss functions observed in optimization frameworks.

References & Further Reading

- Boyd, S., & Vandenberghe, L. (2004). Convex Optimization. Cambridge University Press.

- Hastie, T., Tibshirani, R., & Friedman, J. (2009). The Elements of Statistical Learning.

- Schölkopf, B., & Smola, A. (2001). Learning with Kernels. MIT Press.

- García-Gonzalo, E., et al. (2016). A Study on Kernel Methods for Classification.